Good idea ))

I would use an adversarial neural network to generate a human face ))

One only needs to ask, Tenzorum released an easy way to associate an ENS subdomain to an address:

Indeed, that works like a charm: 16 words from an already existing, standard, multi-language list. A small online JavaScript gadget will definitely be useful. Since it is 16 words and an Ethereum address has no checksum (other than the case-sensitivity one), we could add a 4-byte or 1-2 word checksum later!

tell me more about that…

Isn’t this just like the ENS now subdomains of https://enslisting.com ?

If you prefer a checksum, then I think the evenness bits are not necessary, so we can keep the 16-word length with a 16-bit checksum (16 words × 11 bits = 176 bits = 160 address bits + 16 checksum bits). I’m not sure even that is necessary for our use case of phrase verification; it’s just a way to create some redundancy.

I believe all/majority of wallets/dApps will use name systems (such as ENS) in the future. That’s what we’re all used to, so I guess that’s the best choice if we want mass adoption.

However, your idea is interesting and I guess it could be worth exploring (along with similar ideas) for some alternative use cases. For example, the crypto community is very inclusive and open (one of the reasons I like it so much), so it would be awesome if we had good UX solutions in place for people with disabilities, too.

Yes, it’s an easy way to get an ENS now subdomain. It will help solve the ‘over the phone’ issue and is easy to manually check for correctness. So this is a great solution to many of the problems we associate with public keys.

Many people I know have an @gmail or @icloud email address and do not buy their own domain. So it is similar to the user experience they are familiar with.

Oh yeah, I forgot we’re using two lists, PGP style, for error redundancy. IMO a proper checksum is definitely better than two tables. This approach could also work universally across crypto addresses, since most are hex or an encoding of hex: if we add a Base58Check >> hash160 decoding step, it would instantly work for Bitcoin.

This might be the safest way to transmit a wallet address over the phone today with 16 words +distinguishable +checksum!
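The Bitcoin step mentioned above (going from a Base58Check address back to its 20-byte hash) could look roughly like this. It is only a sketch: the function name `base58check_decode` is made up for illustration, and it assumes a legacy Base58Check address with a 1-byte version prefix and a 4-byte double-SHA-256 checksum.

```python
import hashlib

B58_ALPHABET = "123456789ABCDEFGHJKLMNPQRSTUVWXYZabcdefghijkmnopqrstuvwxyz"

def base58check_decode(address):
    """Return (version_byte, payload); raise ValueError on a bad address."""
    num = 0
    for ch in address:
        # str.index raises ValueError for characters outside the alphabet
        num = num * 58 + B58_ALPHABET.index(ch)
    body = num.to_bytes((num.bit_length() + 7) // 8, "big")
    # Each leading '1' in the text stands for one leading zero byte
    n_zeros = len(address) - len(address.lstrip("1"))
    raw = b"\x00" * n_zeros + body
    data, checksum = raw[:-4], raw[-4:]
    expected = hashlib.sha256(hashlib.sha256(data).digest()).digest()[:4]
    if checksum != expected:
        raise ValueError("Base58Check checksum mismatch")
    return data[0], data[1:]

version, hash160 = base58check_decode("1A1zP1eP5QGefi2DMPTfTL5SLmv7DivfNa")
print(version, len(hash160), hash160.hex())
```

The resulting 20 bytes have the same length as an Ethereum address, so the same 16-word encoder could in principle be reused on them.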

Great idea! I looked up the subject: about 8% of men have color blindness!

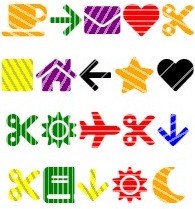

I’ve just added a dictionary that supports color blindness, and it doesn’t look bad at all!

ex. random 0xbdd8fe2a864cfa165b1266649996e976dcc99483 >>

Here is how the entire new dictionary looks for people with deuteranomaly (the most common type; about 5% of all men have it)

https://momcode.io/lab/ >> list #225b

Glad you liked it, even more glad you immediately acted upon it!

I think it would be super awesome if you focus on that in future.

Yes. I thought that ENS is a good solution to this general problem. After all, email addresses are readily recognizable.

@Cygnusfear @drhus @gluk64

I was working on something similar the other day:

It’s still a work in progress. Somebody proposed adding checksums: Bijective Mnemonic Phrases for addresses, hashes, etc

Maybe it can inspire something new.

Also relevant to this topic:

As a first step, I don’t understand why wallet addresses can’t at least start with the currency code: BTC1Co7WbQJzesUgLuQtr7bFWEUfnDFuBS2nm or ETH0xa5b7d615c99f011a22f16f5809890ca6911200a3, etc. so that new users to crypto have some easy way of making sure they’re sending the right currency to the right address.

Very well done @osolmaz, this is useful for matching and comparing as well as for transmitting addresses over the phone. (Btw, have you considered the odd/even error detection scheme, similar to the PGP word list, cc @gluk64? This would be really useful!)

@Cygnusfear Emoji checksumming is  but as visual checksum only, and I can’t envision several use cases? possibly for etherscan like your chrome extension, however, I still prefer your color highlighting approach.

I’m working on an appealing Square/Circle visualization of momcode to test for identicons…

Actually, a prefix with a " : " separator is supported by most wallets!

Bitcoin:1A1zP1eP5QGefi2DMPTfTL5SLmv7DivfNa

Ethereum:0xBB9bc244D798123fDe783fCc1C72d3Bb8C189413 << this URL should open directly in your wallet if you have one installed that supports the prefix.

Some address formats also have an explicit prefix structure: XRP addresses start with " r ", as in rHb9CJAWyB4rj91VRWn96DkukG4bwdtyTh; EOS ones start with " EOS ", as in EOS8RTr8MFSP37tUQCZspPCDpyphed9noU6zTKjqHh93U2DThjdVr; CashAddr addresses (Bitcoin Cash) start with " q ", as in qpm2qsznhks23z7629mms6s4cwef74vcwvy22gdx6a; Litecoin addresses start with " L ", etc.

With 1024 emoji you can do something similar to the bijective mnemonic @osolmaz proposed (for example: https://github.com/keith-turner/ecoji), but with icons (so you can communicate the icons verbally). Emoji are supported by most chat applications so there’s native support for the visuals.

The 3 emoji checksum is not bi-directional. With a 1024 emoji list you can get a public key down to 16 characters (160 bits at 10 bits per emoji) and still allow decoding. The biggest issue here is similar-looking emoji. We should probably add a last character that allows the decoder to checksum the input.

address: '0x03236033522cdCBaC862afBeb56a951649082b78',

emoji: '☀️ 👨 ☀️ 😄 🆎 🐅 📫 Ⓜ️ 📷 🛫 🎈 📐 ⏮ ⛲️ 👁 😩',

text: 'sunny man sunny smile ab tiger2 mailbox m camera airplane_departure balloon triangular_ruler black_left_pointing_double_triangle_with_vertical_bar fountain eye weary'

Of course there is a relevant XKCD for this: https://www.xkcd.com/936/

Considering people traditionally remember narratives easily, “Plane taking off to the sun” etc. I’m imagining a lookup table of narratives.

Having background colors for each row is an option, but it’s not so elegant.

Interesting reference:

@drhus I want to add error detection. Using the even/odd scheme would save us from having to add an extra word to every mnemonic and save space.

There is another issue addressed by @fubuloubu

I quite like it actually! I really think a different set of words than BIP39 would be required. I cringe thinking about someone inadvertently copy/pasting their seed. I know you design it against that, but it WILL happen lol. At least an input field can reject the word list as invalid (although sizes do the same thing)

So for public stuff, we need to come up with a different set of 2048 words. If we want to introduce the even/odd scheme, that would be 4096 as far as I have understood. That’s a lot of words to glean on one’s own.

I scraped some sources and started to work my way down the list. Here is the HackMD in case anyone wants to help:

https://hackmd.io/HWQKqp-ES_iw2kON_znEEA

I obtained the list by scraping sources and removing words that are in the BIP39 list. There are errors, declensions of the same word and words with similar spellings that need to be picked out. I think once picked out, we will end up with ~1600 words, so I will have to repeat the process.

Also, how do you think we should do the checksum?

@Cygnusfear

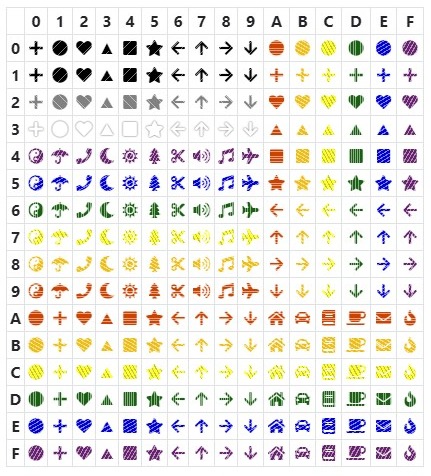

The question is the base / size of your Encoding Dictionary

Q. To encode 20 bytes / 40 hex characters, would you use a pool of:

base 1024, with 1024 emojis, encoding the address in 16 emojis // https://github.com/keith-turner/ecoji

base 256, with the 256 most used/recognizable emojis, encoding it into 20 emojis/symbols // https://momcode.io/lab/?list=4Fun%20-%20Emoji-Random&input=0xf1c337be8df3d49f752b71b4b28b0787716bfd16 (the list called “4Fun - Emoji-Random”; I am still building the emoji frequency list)

or 16-32 symbols with 6-8 colors, encoding 40 characters into 20 symbols, which is the concept behind Momcode? (It is base 256 as well, 1 byte = 1 symbol, but by using colors (+/- underscore) we dramatically reduce the size of the encoding dictionary’s symbol pool.)

The bigger the base (and the size of your encoding dictionary), the shorter the output, but the harder its constituent symbols are to remember, transmit, and describe.

Btw, you can go all the way to base 65536 (Unicode) and encode the same 40-character / 160-bit wallet address in only 10 Unicode code points, but that is a terrible idea for a wallet address (or maybe anything else).

Back to the original question: is it better to encode from a dictionary of the 16-32 most used colorful symbols and express a 40-character wallet address in 20 symbols, or to add 1000 symbols (emojis) to the dictionary and reduce the length from 20 to 16?
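To make the base-versus-length trade-off concrete, here is a small sketch that counts how many symbols a 160-bit address needs for a given dictionary size. The helper name `symbols_needed` is only illustrative. By this count, a 10-bit emoji (base 1024) covers 160 bits in 16 symbols.

```python
def symbols_needed(bits, base):
    """Smallest n such that base**n >= 2**bits, using exact integer math."""
    n, capacity = 0, 1
    while capacity < 2 ** bits:
        capacity *= base
        n += 1
    return n

# Dictionary sizes mentioned in this thread, for a 160-bit address
for base in (16, 256, 1024, 2048, 4096, 65536):
    print(f"base {base:>5}: {symbols_needed(160, base)} symbols")
```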

First of all, I’m not sure I understand why you need to grow the list to 4096. You should be able to encode the 20-byte address in 16 words, with even/odd, out of the 2048-word BIP39 list >> Wallet Shape Address Human-friendly Visualization of Wallet Address : Momcode

I can’t think of use cases that require the complexity of a proper extra-byte checksum for this. I’m keener on the simple even/odd error detection (which allows manual decoding in your head).

I just had the chance to read @gluk64’s proposal and see that we were not on the same page. Now I get it.

As far as I understand, a PGP word list like scheme would protect against

and not

AFAIU the first 1024 words would be for even and last 1024 would be for odd. The bad thing is that we lose the two-three syllable pattern which lets the listener know immediately something is wrong. If we wanted to build such a list, we could have a hard time finding words easy to spell and pronounce for laymen. (Also, I still think we should use separate word lists for public and private stuff, which would make the task even harder.)
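As I read it, the even/odd layout described here could be sketched like this. The index layout is an assumption on my part: indices 0..1023 are the "even" half, 1024..2047 the "odd" half, and each of the 16 words carries 10 address bits. Function names are made up for illustration, and the actual words are left out, only list indices are shown.

```python
WORDS = 16          # words per 160-bit address
BITS = 10           # address bits carried by each word
HALF = 1 << BITS    # 1024 words in each half of the 2048-word list

def address_to_indices(address_hex):
    """Split the address into 16 chunks; odd positions use the second half."""
    value = int(address_hex, 16)
    if value >> (WORDS * BITS):
        raise ValueError("address is longer than 160 bits")
    indices = []
    for pos in range(WORDS):
        shift = (WORDS - 1 - pos) * BITS
        chunk = (value >> shift) & (HALF - 1)
        indices.append(chunk + (pos % 2) * HALF)
    return indices

def indices_to_address(indices):
    """Rebuild the address, rejecting words that sit in the wrong half."""
    if len(indices) != WORDS:
        raise ValueError("expected 16 words")
    value = 0
    for pos, idx in enumerate(indices):
        if idx // HALF != pos % 2:
            raise ValueError(f"word {pos} comes from the wrong half of the list")
        value = (value << BITS) | (idx % HALF)
    return "0x" + format(value, "040x")

idx = address_to_indices("0xbdd8fe2a864cfa165b1266649996e976dcc99483")
print(idx)
print(indices_to_address(idx))
```

Swapping two neighbouring words, or dropping or repeating one, puts a word in the wrong half and is caught. Replacing a word with another one from the same half is not caught, which is the limitation of this scheme compared to a checksum.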

What I was suggesting: Don’t partition the bits like @gluk64’s proposal, and treat the whole thing as a number. If we use a check word at the end, we get away with 15 words + 1 check word.

I propose something like ISBN-10. 2053 is the nearest prime to 2048, so we could add 5 more words to the list, to be used only in the check word. Then we would have a scalable error-detecting scheme that not only works for 20-byte addresses, but for numbers as big as 2048^2053. It would detect 100% of single-word mistyping errors and of transpositions of two words.

Slight disadvantage: implementations will have to use big-integer libraries (unless integers are arbitrary-precision by default, as in Python).
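A minimal sketch of such an ISBN-10-like check word, under my own assumptions about the details: word positions are weighted 1, 2, 3, ..., the check word takes the last weight, and a phrase is valid when the weighted sum is 0 modulo 2053. The function names are hypothetical, and only list indices are shown, not words.

```python
P = 2053            # prime just above the 2048-word list size
BASE_BITS = 11      # bits per data word (2048-word list)
DATA_WORDS = 15     # 15 * 11 = 165 bits, enough for a 160-bit address

def address_to_indices(address_hex):
    """Treat the address as one big number and cut it into 15 word indices."""
    value = int(address_hex, 16)
    mask = (1 << BASE_BITS) - 1
    return [(value >> (BASE_BITS * (DATA_WORDS - 1 - i))) & mask
            for i in range(DATA_WORDS)]

def check_word(indices):
    """Index (0..2052) that makes the weighted sum of all words 0 mod P."""
    n = len(indices)
    weighted = sum(w * d for w, d in enumerate(indices, start=1))
    inverse = pow(n + 1, P - 2, P)   # modular inverse via Fermat, P is prime
    return (-weighted * inverse) % P

def is_valid(indices_with_check):
    total = sum(w * d for w, d in enumerate(indices_with_check, start=1))
    return total % P == 0

data = address_to_indices("0xbdd8fe2a864cfa165b1266649996e976dcc99483")
phrase = data + [check_word(data)]
print(phrase, is_valid(phrase))
```

Because the check value can land anywhere in 0..2052, the five extra words are only ever needed in the last position. Python's integers are arbitrary-precision, so no big-integer library is needed here.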

Do let me know which one you think is better and why.

I took a second look at BIP39 and came up with a way of reducing the number of words to 14 by using a list of 4096 words, and doing the checksum with hash functions like in BIP39. It’s in the same page I posted before.
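For illustration, the 14-word variant could work along these lines. This is a sketch under assumptions, not what the linked page necessarily specifies: I assume the checksum is the first 8 bits of SHA-256 over the 20 address bytes, appended BIP39-style, so that 160 + 8 = 168 bits split into 14 indices of 12 bits each. Function names are hypothetical.

```python
import hashlib

WORDS = 14
BITS = 12           # 4096-word list

def address_to_indices14(address_hex):
    hex_part = address_hex[2:] if address_hex.startswith("0x") else address_hex
    raw = bytes.fromhex(hex_part)
    if len(raw) != 20:
        raise ValueError("expected a 20-byte address")
    checksum = hashlib.sha256(raw).digest()[0]   # assumed: first 8 hash bits
    value = (int.from_bytes(raw, "big") << 8) | checksum
    return [(value >> (BITS * (WORDS - 1 - i))) & 0xFFF for i in range(WORDS)]

def indices14_to_address(indices):
    if len(indices) != WORDS or any(not 0 <= i < 4096 for i in indices):
        raise ValueError("expected 14 indices in 0..4095")
    value = 0
    for idx in indices:
        value = (value << BITS) | idx
    raw = (value >> 8).to_bytes(20, "big")
    if hashlib.sha256(raw).digest()[0] != (value & 0xFF):
        raise ValueError("checksum mismatch")
    return "0x" + raw.hex()

idx = address_to_indices14("0xbdd8fe2a864cfa165b1266649996e976dcc99483")
print(idx)
print(indices14_to_address(idx))
```

Note that an 8-bit hash checksum lets a random error slip through about 1 time in 256, so it is weaker per phrase than it may sound.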

I have a question. It may seem a bit unrelated, and I’m sorry, I’ve been asking this all over, but it’s an idea I’ve been thinking about for a while. I’m no expert, as some of you will come to see from my somewhat crude question and from the fact that I use a Bitcoin library to present it. I have no bias, I’m just more familiar with that library, so bear with me.

This is a bit of a general question, knowing already of HD wallets and the mnemonic system for creating, backing-up and using the bitcoin blockchain.

However, say you have, for example, a cold/paper wallet with just one Bitcoin private key.

Say I use bitaddress.org’s code to securely generate a paper wallet. Then I import it using the library I use below, export it to its integer form, and express the resulting integer as a power of some number that is exactly equal to the exported integer value. Say it’s 10^98: that’s pretty easy to remember, and I generated it securely, no?

Wouldn’t an easier way to back up/save/remember that address simply be to figure out where the key lies within the range of possible Bitcoin keys and take note of the exponent of said number?

The private key below is generated from the number 2^65

```python
from bit import Key

# The exact position of this key is 2^65 in int form: 36893488147419103232
privKey = Key.from_int(36893488147419103232)

# or simply
privKey = Key.from_int(pow(2, 65))

print(privKey.address)
print(privKey.segwit_address)
```

This outputs the addresses 1LgpDjsqkxF9cTkz3UYSbdTJuvbZ45PKvx and, for segwit, 3FC7umZDWPvTskbVwG7mn72M8RtW8yFSy7. All I’d have to remember is just that exponent to import this private key, for example…