I might be totally off topic here, but as far as I understand, the motivation for this ongoing work is to enable more localised, decentralised Ethereum network infrastructure, which is a general challenge the ecosystem faces.

What often trips me up in these EF discussions is how much abstract, mathematical thinking there is, as opposed to attention to the physical, real world of telecommunications.

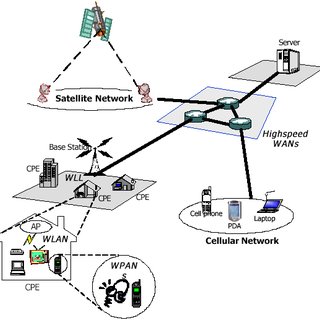

TCP networks do not look like “client ↔ server”. They look (very simplified) like this:

In the articles linked initially, network throughput is raised as a concern, but there is no analysis of what physical topology such throughput demands.

It’s also claimed that

However, this problem statement leaves out the detail that the bottleneck is not technical difficulty. It is a matter of financial incentive and return on investment: the difficulty is justifying technical requirements that are entirely tangible and feasible. Ethereum's hardware requirements are not like TON's, where you need a datacenter rack to launch a node.

The challenges are: i) the financial incentive to do so (low APY plus the increased costs of broadband internet and a static IP); ii) the difficulty (cost) of obtaining a static IP address from my ISP; iii) the lack of a competitive market for Ethereum-node-oriented hardware (a dappNode with a $2k price tag is laughable).

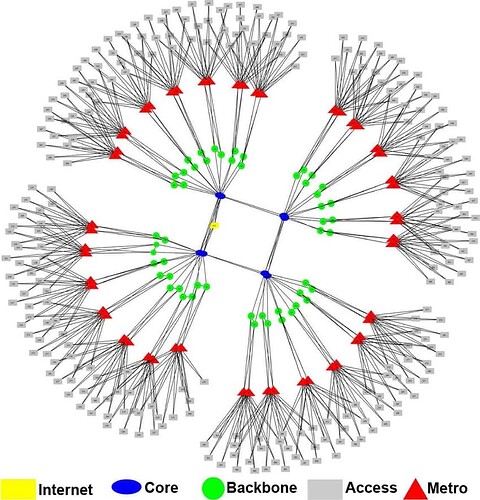

Further, the local RPC-serving nodes proposal here assumes that clients may want to store partial data. While I agree with that, if we take into account what we know about how the network topology of ISPs looks today:

Then we are still missing an analysis of throughput demand. Is it really uniform (do transactions happen with equal probability between any two randomly picked end clients)?

I doubt it. I'd rather bet that the majority of settlements requiring high throughput actually happen between local access points (end users).
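To make the uniform-versus-localised question concrete, here is a toy simulation. It is only a sketch under made-up assumptions: the number of ISPs, the clients per ISP, and the `p_local` parameter (the probability that a sender picks a counterparty behind the same ISP) are hypothetical and not measured from any real network.

```python
import random


def local_fraction(num_isps, clients_per_isp, p_local, trials, seed=0):
    """Estimate the share of transactions whose two parties share an ISP.

    p_local is an ASSUMED probability that the sender picks a counterparty
    behind its own ISP. With p_local = 0 the counterparty is drawn uniformly
    from all other clients, which is the "uniform demand" model.
    """
    rng = random.Random(seed)
    total = num_isps * clients_per_isp
    local = 0
    for _ in range(trials):
        sender = rng.randrange(total)
        sender_isp = sender // clients_per_isp
        if rng.random() < p_local:
            # Locality-biased choice: counterparty is behind the same ISP.
            receiver_isp = sender_isp
        else:
            # Uniform choice among all clients except the sender itself.
            receiver = rng.randrange(total - 1)
            if receiver >= sender:
                receiver += 1
            receiver_isp = receiver // clients_per_isp
        if receiver_isp == sender_isp:
            local += 1
    return local / trials


# Hypothetical network: 100 ISPs with 1000 clients each.
uniform = local_fraction(100, 1000, p_local=0.0, trials=20000)
biased = local_fraction(100, 1000, p_local=0.8, trials=20000)
print(f"uniform model: {uniform:.3f} of transactions stay inside one ISP")
print(f"biased model:  {biased:.3f} of transactions stay inside one ISP")
```

Under the uniform model almost no traffic stays inside a single ISP, so a partial client there would rarely help. Under the biased model most of it does. Which of the two is closer to reality is exactly the analysis that is missing.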

If the majority of traffic is localised, then the candidates to run such “partial” clients are ISPs, last-mile carriers and telecom companies. They should be able to provide near-instant settlement of transactions for the network clients and contracts that are a subset of their underlying client base, and then slowly settle those transactions to the main ledger (and how does that play with the rollup-centric stuff?).

And that’s my point: they have no problem running a 4TB SSD. They already have static IP addresses (!). They have specialised hardware companies and expertise that can enter the market and create competitive hardware pricing.

In many respects, last-mile carriers are much better candidates to reinforce network decentralisation and localisation.

The problem is that they don’t see an incentive to do this.

If I’m running a local node, there must be a way to earn a high yield for being able to settle end-user transactions quicker than others, or for relaying the requested data to the end user (and here partial state can be thought of as the caching layer of a distribution network).

That gets me back to the BFT-PoLoc paper I linked. If my local node can prove that it is able to do the fastest settlement between two network clients, it should be able to take that opportunity, putting the stake it already holds at risk.
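As a rough illustration of what a latency-based settlement market could look like, here is a minimal sketch. It is not the BFT-PoLoc protocol. The `Candidate` type, the `pick_settler` function, the minimum-stake rule and all the numbers are hypothetical, and the latencies are assumed to be already proven by some location or latency proof.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    name: str
    stake_eth: float               # stake the node already has at risk
    latency_ms_to_sender: float    # ASSUMED to be attested, not self-reported
    latency_ms_to_receiver: float


def pick_settler(candidates, min_stake_eth):
    """Return the staked candidate with the lowest combined latency, or None."""
    eligible = [c for c in candidates if c.stake_eth >= min_stake_eth]
    if not eligible:
        return None
    return min(
        eligible,
        key=lambda c: c.latency_ms_to_sender + c.latency_ms_to_receiver,
    )


# Hypothetical candidates for one settlement between two nearby end users.
candidates = [
    Candidate("remote-datacenter", 32.0, 45.0, 50.0),
    Candidate("local-isp", 32.0, 3.0, 4.0),
    Candidate("unstaked-neighbour", 0.0, 1.0, 1.0),
]
winner = pick_settler(candidates, min_stake_eth=32.0)
print(winner.name if winner else "no eligible settler")
```

In this toy setup the remote data center cannot win against a staked node sitting at the last mile, and a node with no stake at risk is not eligible no matter how close it is.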

Then you get a win-win: the network scales like crazy, because that’s what users want (instant, secure and cheap settlements), and spatial decentralisation is achieved (data centers located far from users naturally cannot win settlement profits from ISPs or last-mile carriers if the market is latency-based).