Read the original article:

https://www.nature.com/articles/s41598-023-47219-0

Key Message:

Unlike static analysis tools, which depend on fixed, predefined rules, deep learning models capture both the syntax and the semantics of code during training, which lets them learn vulnerability features more accurately.

Main Results:

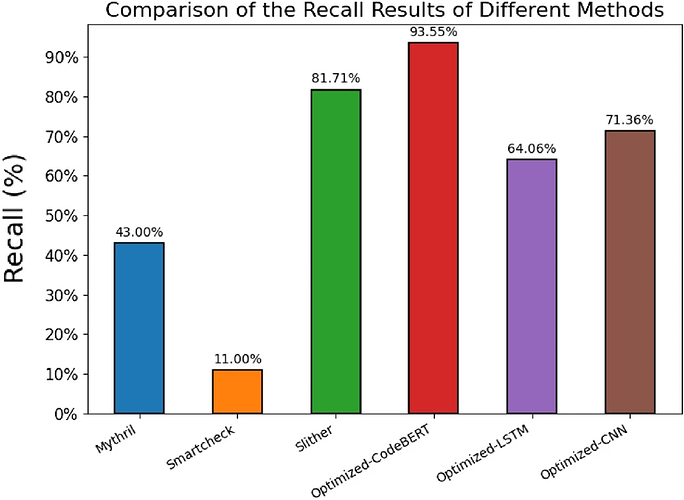

The proposed Optimized-CodeBERT model achieves the highest recall when compared with the static analysis tools.

The proposed Optimized-CodeBERT model also outperforms the other deep learning models, reaching the highest F1 score among them.
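For readers less familiar with the two metrics cited above, here is a minimal sketch of how recall and F1 are computed from binary confusion counts. The `Confusion` class and the example counts are made up for illustration; they are not taken from the paper.

```python
from dataclasses import dataclass


@dataclass
class Confusion:
    """Binary confusion counts; 'positive' means 'flagged as vulnerable'."""
    tp: int  # vulnerable contracts correctly flagged
    fp: int  # safe contracts wrongly flagged
    fn: int  # vulnerable contracts that were missed


def precision(c: Confusion) -> float:
    """Share of flagged contracts that are actually vulnerable."""
    flagged = c.tp + c.fp
    return c.tp / flagged if flagged else 0.0


def recall(c: Confusion) -> float:
    """Share of vulnerable contracts that were flagged."""
    actual = c.tp + c.fn
    return c.tp / actual if actual else 0.0


def f1_score(c: Confusion) -> float:
    """Harmonic mean of precision and recall."""
    p, r = precision(c), recall(c)
    return 2 * p * r / (p + r) if (p + r) else 0.0


# Made-up counts for illustration only; NOT results from the paper.
example = Confusion(tp=80, fp=20, fn=10)
print(f"recall={recall(example):.3f}  f1={f1_score(example):.3f}")
```

High recall means few vulnerable contracts are missed, while F1 also penalizes false alarms, which is why a model can lead on one metric without leading on the other.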

Training on feature segments extracted from the vulnerable code improved the model's performance in vulnerability detection.

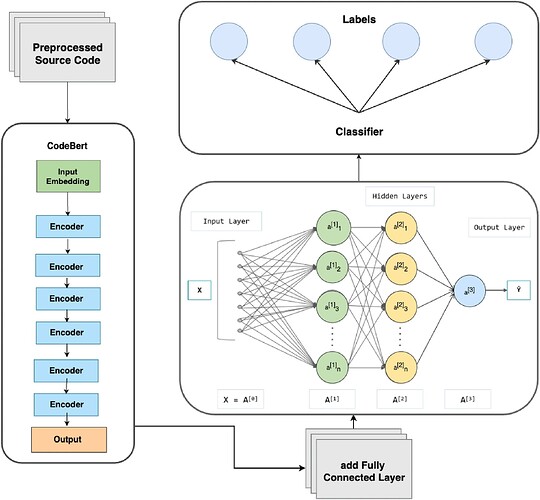

The CodeBERT pretrained model is employed to represent text, thereby improving semantic analysis capabilities.

Methods:

Study type: Experimental

Study aim: To explore how well deep learning models detect smart contract vulnerabilities and to assess whether they outperform traditional static analysis tools.

Experiments:

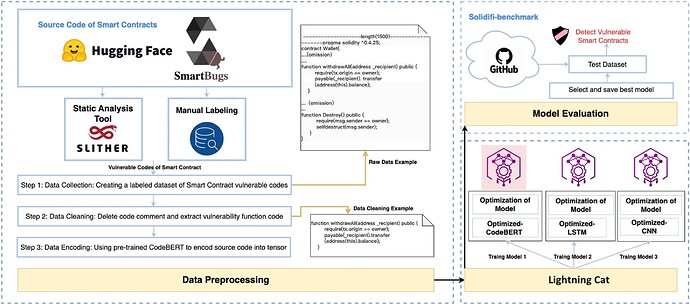

A deep learning based solution is introduced, comprising three models: Optimized-CodeBERT, Optimized-LSTM, and Optimized-CNN.

The models are trained on feature segments of vulnerable code functions in order to retain critical vulnerability features.
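To make the idea of a function-level feature segment concrete, the sketch below pulls whole functions out of a Solidity source string and keeps only those containing a risky construct. This is an illustrative assumption, not the paper's extraction procedure: the `RISKY_PATTERN` marker (a low-level `.call`), the helper names, and the toy `Bank` contract are all hypothetical.

```python
import re

# Hypothetical marker for illustration: a low-level call, a construct often
# associated with reentrancy. The paper's own selection criteria may differ.
RISKY_PATTERN = re.compile(r"\.call\b")
FUNCTION_HEADER = re.compile(r"\bfunction\b[^{;]*\{")


def extract_functions(source: str) -> list[str]:
    """Return each function definition (header plus body) found in `source`.

    Uses naive brace counting, so braces inside strings or comments would
    confuse it; a real pipeline would more likely use a Solidity parser.
    """
    functions = []
    for match in FUNCTION_HEADER.finditer(source):
        depth = 0
        # Start at the opening brace that ends the function header.
        for i in range(match.end() - 1, len(source)):
            if source[i] == "{":
                depth += 1
            elif source[i] == "}":
                depth -= 1
                if depth == 0:
                    functions.append(source[match.start():i + 1])
                    break
    return functions


def feature_segments(source: str) -> list[str]:
    """Keep only the functions that contain the risky construct."""
    return [f for f in extract_functions(source) if RISKY_PATTERN.search(f)]


# Toy contract written for this example.
CONTRACT = """
contract Bank {
    mapping(address => uint) balances;

    function deposit() public payable {
        balances[msg.sender] += msg.value;
    }

    function withdraw(uint amount) public {
        if (balances[msg.sender] >= amount) {
            msg.sender.call.value(amount)();
            balances[msg.sender] -= amount;
        }
    }
}
"""

segments = feature_segments(CONTRACT)
print(segments[0])
```

Feeding the model only the `withdraw` function, rather than the whole contract, keeps the input short and concentrates it on the code where the vulnerability actually lives.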

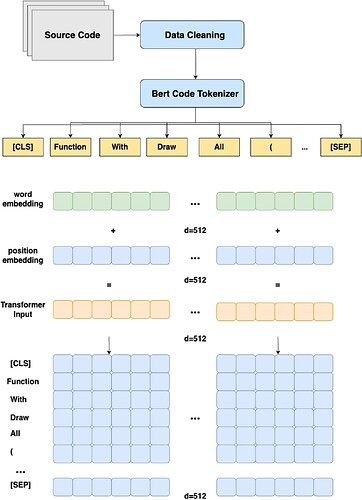

The proposed Optimized-CodeBERT model uses the pretrained CodeBERT model for text representation during data preprocessing.