I believe this footnote addresses your question. Indeed, the burn may shift the real yield up, but that shift is constant across the real yield curves, so it does not change the relative quantities or the arguments of the post.

I’d like to share my perspective as an average home staker to provide a more down-to-earth example:

If issuance approaches zero or goes negative, I’ll continue staking out of conviction and also because I don’t want to make the “effort” to withdraw my validator. Most home stakers, like me, want to avoid unnecessary entries or exits of their validators. This benefits the network.

However, if negative issuance persists, I’ll be forced to withdraw because it’s not sustainable for me in the long term (I will no longer have ETH at the end so it’s better to exit beforehand)…

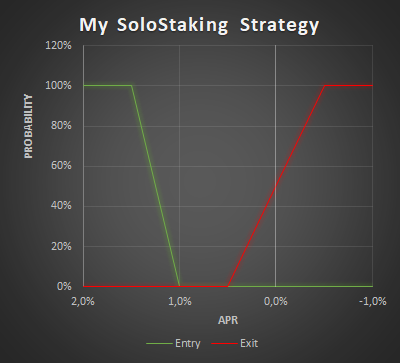

This creates a re-entry barrier: I would have to redeposit and set up my validator again, an effort I won’t undertake if the low APR remains. I’m not a machine; I won’t cyclically enter and exit, as it demands effort and entails risks. I’ve sketched my stance on a graph to illustrate the gap between my APR thresholds for exiting and re-entering. (The figures are quick approximations and vary among home stakers.)

This simple graph demonstrates that if the APR falls significantly low, I will not consider re-entering if it persists in that range, effectively establishing a ‘no-return buffer’.
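The exit and re-entry gap described above is a hysteresis loop, and a minimal sketch can make it concrete. The thresholds below (`EXIT_BELOW`, `REENTER_ABOVE`) and the APR path are hypothetical values chosen for illustration; they are not the figures from the graph, and the sketch ignores the "persistence" condition for simplicity.

```python
def next_state(staking, apr, exit_below, reenter_above):
    """Return True if the staker is staking after observing `apr`."""
    if staking:
        # Already staking: stay unless APR drops below the exit threshold.
        return apr >= exit_below
    # Already exited: only return once APR clears the higher re-entry threshold.
    return apr >= reenter_above


# Hypothetical thresholds (assumptions, not the values from the graph).
EXIT_BELOW = 0.0      # exit once APR turns negative
REENTER_ABOVE = 0.02  # re-enter only if APR is back above 2%

apr_path = [0.03, 0.01, -0.005, 0.01, 0.015, 0.025]
staking = True
history = []
for apr in apr_path:
    staking = next_state(staking, apr, EXIT_BELOW, REENTER_ABOVE)
    history.append(staking)

print(history)
```

In this sketch an APR of 1% keeps an existing staker in but does not bring an exited staker back, which is the "no-return buffer" between the two thresholds.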

It suggests that pushing issuance toward zero or negative risks driving away home stakers not out of conviction but due to re-entry barriers.

However, I believe there’s an equilibrium APR before reaching 100% stake. Solutions like MEV burn and reducing issuance seem like simpler and quicker ways to reach this equilibrium at a lower ETH staking ratio.

I don’t think it’s necessary to implement a complex targeting system, as I don’t believe (or hope) we’ll reach 100% of ETH staked. However, if implemented, I think it could cause side effects for home stakers.

First of all, great job. I read most of this proposal very carefully, and I agree with most of the points brought up in it. I also took to social media to support the proposal and to address some of the popular criticisms:

- Some simply do not acknowledge the value of ETH as money: x.com, or they question the seriousness of the proposal (x.com), which I find incomprehensible.

- Some of the criticism seems to be without substance: x.com or it misses the key points of the proposal, namely that LSTs challenge ETH’s money-ness: x.com

- There is a class of criticism that entirely misses the point, as it assumes the SEC would monitor the internet to draw conclusions on whether ETH is a security based on what is written in this forum: x.com Even if this were the case, why would it matter when engineering the protocol and addressing its challenges outlined above? The SEC is not the primary customer we’re building the protocol for.

- Everyone who holds ETH should be more aware of how incredibly valuable it is for an asset to have a monetary premium. Educate yourselves here, for example https://www.aier.org/article/on-monetary-premia/. Everyone who has to pull 17 magic tricks a quarter to preserve their wealth’s value over time is intuitively aware of how valuable it is to JUST hold a true store of value. That should be the vision for being an ETH holder. Not to imitate the existing financial system where I have to cast 23 different spells on my fiat money such that it doesn’t insta depreciate, but where I can buy ETH and know that the network’s productivity (that goes far beyond just staking) takes care of preserving the value.

Then, this aside, I picked two quotes from the article:

To the authors, I would emphasize immensely this part of the proposal, which I think is counterintuitive for many ETH and LST holders. If I understood correctly, then the punch line here is, in reality, that with more staked ETH receiving yield, ETH inflates more in absolute terms, which means holding ETH becomes even less economical.

I think printing a curve showing the number of staked ETH vs. how high absolute inflation is could help to explain this or further understanding. E.g., I’d be interested in how much ETH is being printed when, e.g., 60M ETH is staked at 2% vs., e.g., 30M ETH at 3%.
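The comparison asked about here is simple arithmetic, sketched below. The staked amounts and yields come from the question itself; the total supply of 120 million ETH used for the inflation rate is an assumed round number, not a figure from the proposal.

```python
def annual_issuance(staked_eth, staking_yield):
    """ETH issued per year when `staked_eth` earns `staking_yield` (as a fraction)."""
    return staked_eth * staking_yield


# Assumption: a round total supply, used only to express issuance as an inflation rate.
TOTAL_SUPPLY_ETH = 120_000_000

high_stake = annual_issuance(60_000_000, 0.02)  # about 1.2M ETH per year
low_stake = annual_issuance(30_000_000, 0.03)   # about 0.9M ETH per year

print(f"60M staked at 2%: {high_stake:,.0f} ETH/year, "
      f"{high_stake / TOTAL_SUPPLY_ETH:.2%} of supply")
print(f"30M staked at 3%: {low_stake:,.0f} ETH/year, "
      f"{low_stake / TOTAL_SUPPLY_ETH:.2%} of supply")
```

Under these assumptions, the scenario with the lower yield but more stake issues more ETH in absolute terms, so a non-staking holder is diluted more.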

I actually think a part of the requirement here should also be that “holding raw ETH is simply like holding money,” that is, it should be straightforward to hold money and not require me to do 17 tricks to hold the actual money and not a derivative that is actually inflated away by someone who is more sophisticated than me.

This, in turn, also means that we should see staking rewards merely as compensation for providing a service and paying expenses for that service. And in that line of arguing, I’d like to say: Motivationally, I’m pretty sure that most validators already hold ETH for its money-ness or as an investment. ETH has also been a great investment, especially in a fiat currency environment where all value globally is rapidly inflated. So, we can hardly argue that we should pay ETH validators for the risk of holding >= 32 ETH. So then we actually pay them for an active internet connection, a computer, the amount of time they spend on maintenance, and the risk of participating in validating. And what is that cost? I doubt that it is, in reality, so high that barely anyone does it economically. I personally know of people whose non-technical family members also got a computer to stake ETH because it was apparently such easy money! WTF

If you think about how you have to put your fiat money into an ETF that then spends it on 500 US-based companies and has this incredible black-box complexity, all just to preserve the fiat money’s value over time, then this is the inverse of what we should be aiming for, and sadly, this is the direction we’ve been going towards. So I support this proposal! Make holding ETH economical and preserve its property of being money.

For targeting staking ratios we should use a PID controller.

The GEB controller seems robust enough and is doing a great job in RAI and related forks. It takes a target and sets the rates accordingly. Plus, it’s very well documented and tested in production, with community tweaks.

I’d like to know what other ideas were discussed and dismissed besides yield issuance. I think the community is focused on yield over new ideas, and I think there are many options that are worth exploring as well.
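For readers unfamiliar with the idea, here is a minimal generic discrete PID controller sketch. It is not the GEB implementation; the class name, gains, and the 25% target staking ratio are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass
from typing import Optional


@dataclass
class PIDController:
    """Textbook discrete PID controller (illustrative, not GEB's code)."""
    kp: float      # proportional gain
    ki: float      # integral gain
    kd: float      # derivative gain
    target: float  # desired value of the measured quantity
    integral: float = 0.0
    prev_error: Optional[float] = None

    def update(self, measured: float, dt: float = 1.0) -> float:
        """Return the control output for one time step of length `dt`."""
        error = self.target - measured
        self.integral += error * dt
        # No derivative term on the first step, since there is no previous error.
        derivative = 0.0 if self.prev_error is None else (error - self.prev_error) / dt
        self.prev_error = error
        return self.kp * error + self.ki * self.integral + self.kd * derivative


# Hypothetical setup: target a 25% staking ratio, observe 30%.
controller = PIDController(kp=0.5, ki=0.1, kd=0.0, target=0.25)
adjustment = controller.update(measured=0.30)
print(adjustment)  # negative: the controller pushes the rate down
```

When the measured staking ratio is above the target, the error is negative and the output lowers the rate; how that output maps to an issuance yield is a separate design decision not covered here.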

What ideas do you have? I’ll go first to spark some inspiration:

- Reduce new validators. Further, reduce the limit to 1 new validator per 6.4-minute epoch. This could be done dynamically as the stake increases. If significantly reduced, it would make building a large node operator uneconomical due to the time needed to get validators online, and larger staking pools would end up with less yield as they share the yield with users’ ETH that is not yet staked, which gives home stakers a better yield option.

- Do nothing. The market settles, and staking deposits settle down.

- Enshrine an LST, control the community, and stop bad actors from controlling the protocol. This is a controversial option, given the investment and time that organizations have put into staking pools.

- Dynamic max deposit amount. Research an acceptable amount of economic security and design around that. Price and volume deposited dynamically managed.

- Randomized Yield: Implementing a system where rewards vary randomly between 1% and 6% could discourage professional node operations due to its unpredictability.

- Non-Yield Staking: Stake accumulation could be tied to gas fee usage instead of providing a yield. This might encourage home stakers but doesn’t incentivize broader participation.

- Increase the gas fee to the deposit contract to prevent the economy from stacking up.

- Correlated attester penalties: This aims to penalize big stakers; it would be interesting to model this. For more info, see a post by Vitalik.

It’s an interesting topic; however, there is something puzzling me and I’m curious to hear your thoughts.

It seems the main concern is around LSTs. However, by targeting a staking ratio, are you not going to exacerbate the preference for adopting an LST solution? What I mean is that the way rewards are distributed to validators tends to follow skewed distributions: essentially, larger entities tend to earn more on a percentage basis. This is mainly because larger entities are pooling rewards that come from long-tailed distributions. Thus, in the end, it would always be more profitable to pool rewards, and so to join an LST solution.

In other words, how can you be so sure that targeting a staking ratio doesn’t tend to make things worse?

I think this is a solid observation. But it is also important to consider that there may be other reasons to operate a node beyond economics, or that new technology may develop to make the model more economical. This happens often: new technology improves the cost-efficiency of operations.

I really agree with this. I think you are right, though it may be controversial.

I like this idea a lot. The randomness could discourage professional node operations as you mentioned, which would certainly help decentralize the network. I think this idea is really innovative and could really improve things. Nice one.

Overall, nice post.

Ansgar and Casper’s talks at Devcon were pretty interesting and have inspired me to do some napkin research on this topic. I agree that there is a problem with the incentives, but the solution seems to have some major problems:

- This will affect existing stakers (rugged!)

- Choice of parameters feels random. Because of the first point, this feels political.

- Staking yield goes below zero. It seems to me there is an unintentional attack with restaking, where past a certain point yield is negative but it is still profitable for restakers. At that point, non-restakers are forced to either restake or get out, resulting in 100% of staked ETH being restaked.

My quick and dirty idea introduces two new parameters:

- MTS: A targeted maximum total staking percentage (something between 29% (the current total stake according to https://dune.com/hildobby/eth2-staking) and 100%).

- TVS: A targeted maximum validator share (something between 32 ETH and 33% of the total stake).

The idea is that once the total ETH staked reaches MTS, the issuance curve is set such that any entity with at least TVS staked has a marginal reward of 0 when adding another validator.

For example, let’s say MTS is 50% and TVS is 0.2%. While the total ETH staked is below 50%, the issuance curve remains the same as it is now. At 60 million ETH staked (the 50% mark in this example), an entity holding 0.2% of the stake receives approximately 2578 ETH/year. Beyond that point, any entity with 0.2% of the stake or more will still only ever receive 2578 ETH per year, no matter how many new validators it spins up.
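The 2578 ETH/year figure can be roughly reproduced with a short sketch. It assumes the commonly quoted approximation of the current issuance curve, annual issuance ≈ 2.6 · 64 · sqrt(staked ETH), and takes 60 million ETH staked as the 50% mark; both are assumptions for illustration and are not taken from the spreadsheet.

```python
import math

# Assumption: commonly quoted approximation of the current curve,
# yield ~= 2.6 * 64 / sqrt(staked ETH), so issuance ~= 166.4 * sqrt(staked ETH).
CURVE_CONSTANT = 2.6 * 64


def total_issuance(staked_eth):
    """Approximate ETH issued per year at a given total stake."""
    return CURVE_CONSTANT * math.sqrt(staked_eth)


def entity_reward(share_of_stake, staked_eth):
    """Approximate yearly reward for an entity holding `share_of_stake` of all stake."""
    return share_of_stake * total_issuance(staked_eth)


STAKED_AT_MTS = 60_000_000  # 50% staked, assuming a supply of about 120M ETH
TVS = 0.002                 # 0.2% of the total stake

reward_at_cap = entity_reward(TVS, STAKED_AT_MTS)
print(round(reward_at_cap))  # roughly 2578 ETH/year
```

Under these assumptions, an entity holding exactly the TVS share at the MTS point earns about 2578 ETH per year, which matches the number used in the example.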

I have a spreadsheet of the proposed issuance curve here: Staking Endgame.xlsx - Google Sheets (Hopefully no major mistakes, I know there are some visualization bugs if the parameters are set outside reasonable ranges).

This design has the following benefits:

- As long as MTS is set above the amount staked at the time of the fork, there is no disruption to existing stakers.

- Yield always remains positive. This is beneficial because I think if yield goes negative at some point, restakers will be able to drive everyone else out until 100% of staked ETH is restaked.

- As an added bonus, this adds an incentive against a single party having control of too much stake (note that single entity doesn’t refer to something like a staking service, which wouldn’t be affected).

- The design decisions are more or less neutral. Only MTS and TVS are sort of random, but I think choosing credibly neutral values shouldn’t be too hard. In any case, because of point 1 (and because there should be collusion between large stakers to avoid reaching MTS), these values shouldn’t be that political.

- The resulting issuance curve puts a theoretical cap on ETH issuance, which is great for the ultrasound money meme.

Downsides:

- The yield curve becomes discontinuous (not sure about the implications of this). If this is really a problem, you could split MTS into MTS1 and MTS2, making marginal staking yield for TVS sized validators zero only after MTS2 is reached by linear interpolation from MTS1 (nice, now you can use both 30% and 50% instead of trying to decide between them for MTS).

- It’s possible that ETH staked exceeds MTS without any validator reaching TVS. I don’t think this is likely as long as some analysis of the distribution of ETH across addresses is done to choose TVS. In any case even if this occurs there is still the benefit that it increases the decentralization of staked ETH.

- It’s unclear how restaking yield will interact with this design. Possibly you might want to make the values for MTS or TVS more conservative than the idealized values.

Adjusting the staking reward rate is not a rug… all staking rewards are paid for by the network. Any reduction in issuance is offset by the benefit of reduced dilution.

In this scheme, how do you protect against Sybil attacks? It sounds like I can easily defeat the TVS condition by just spinning up a new entity.

TVS is an economic parameter, not something that targets any individual validator. The way it works is that past MTS, issuance actually starts dropping. As a result, the added ETH from any new validator is offset by the reduced yields from their existing validators, resulting in zero marginal yields. Anyone over TVS actually gets negative marginal gains, while anyone below TVS still has positive marginal yields. This is why I think this proposal actually also has benefits to stake decentralization. Another key point is that this economic incentive applies to LST holders as well.

Re Zarevoks: rugged. That was sarcastic, sorry. I understand the argument on dilution, but I don’t expect everyone to.

I’ve commented this elsewhere but I think it’s applicable to this post as well. You seem to be a mix of points 2 and 3 below which I argue are not convincing in the linked post. In particular for LST dominance I’ll see if I can post about my thoughts on how we can keep native eth competitive with LSTs soon without messing with issuance.

To avoid the feeling, which I think a number of people get, that these are just your preferences and somewhat arbitrary, the need for introducing MVI itself has to be made very explicit, and I don’t think a clear, high-level need has been defined. I think I’ve seen 3 high-level needs articulated for why we should pursue this (I’ve addressed them in my replies already but just to make things concise):

- Large Validator Set Network Instability (a tech problem that already has some reasonable approaches to potentially solving)

- Reducing LST Dominance (I still have no idea why an LST can’t dominate even with more limited issuance)

- Avoid paying too much for security (extremely subjective what too much security is)

If you can articulate a higher-level need that is really driving a change like this, one that has a massive impact on every network participant, I’d be open to hearing you out. I don’t think I’ve seen it yet. And getting lost in the minutiae of which curve specifically to choose with extremely detailed long posts isn’t going to help move the proposal forward imo.

Can I suggest that the main problem is the focus on defining a single yield curve for all validators?

For any given yield curve, there will be an optimized validator type that has a competitive advantage against others, leading to a concentrated validator set in the endgame. To solve for validator diversity, you actually need to have multiple yield curves with varying tradeoffs such that different validator types can each find a niche position in the ecosystem.

As an example, if instead of a single yield curve you introduce 2 yield curves, one for validators that can exit at will and one for validators that have locked their stake for a given time, you can force diversification of the validator set. There will be a set of validators that will accept a low yield as long as they can exit immediately, and a different validator set will exist that is willing to lock their stake for extended periods for a more stable or higher yield rate.

Centralized entities that need to meet withdrawal requirements for their customers might be forced to have access to immediate withdrawal, while solo stakers might be willing to lock up stake in exchange for higher yield.

This is similar in concept to government bonds. There is no single bond type offered by the government, but rather a multitude of different bonds (30 yr, 10 yr, inflation protected, etc) which each attract a specific type of investor with a unique risk profile.

No single issuance curve will be agreed to by all validators, but introducing a range of curves with different trade offs would likely grab more support as each validator type would find a niche.

What is the benefit to the protocol for having some validators with longer lockup requirements?

I would assume a stable validator set with long-term validation guarantees would be a net benefit to the security of the protocol. Depending on the exact levels, you could also save on ETH inflation in certain scenarios.

But the main benefit from my perspective is more to force diversification of the validator set. I think it would be valuable to diversify the validator set in this way even if long term locked stake had no direct benefit to the protocol.

What leads you to this assumption?

It is not clear to me why there would be a benefit to the protocol in having a diversity of lockup lengths. Diversity isn’t useful just for diversity’s sake; we often want diversity because it gives us robustness in some cases, but I don’t see how this would increase our robustness.