By Professor Aleksander Kuzmanovic & Eleni Steinman @ bloXroute Labs

At bloXroute we have spoken to many in the community about safely increasing the gas limit and have created this post to encapsulate those conversations and continue the discourse.

Note: We were limited in the number of included hyperlinks and used additional spaces to trick the system.

How do we know the gas limit can be increased safely?

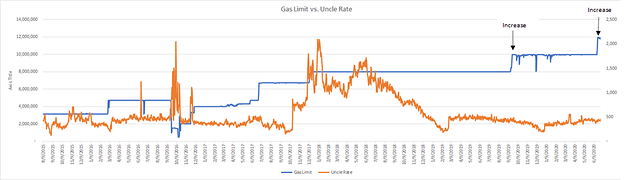

In July, the total gas used on the Ethereum blockchain reached a new all-time high after Ethereum miners voted to increase the block gas limit by 25% (from 10,000,000 to 12,500,000). Our data implies that this increase was modest and that a much higher gas limit could be deployed. Even so, we argue that (1) gas limit upgrades should be incremental, to allow sufficient time to assess the effects of each one, and (2) in the absence of negative side effects, such modest increases can be conducted more frequently.

Because bloXroute operates at the networking layer and works closely with many of the mining pools, we can further see that the network is healthy and can handle a higher transaction throughput (TPS) than we are currently seeing. There was no statistically significant increase in the uncle rate after the gas limit was increased by 25%. The same held after the 25% increase in September 2019 (which occurred in conjunction with bloXroute going live).

Data from Etherscan.io
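
One way to check a claim like "no statistically significant increase in the uncle rate" is a one-sided two-proportion z-test on uncle counts before and after the change. The sketch below is an illustration only: the function name and the block and uncle counts are hypothetical, not Etherscan data, and the post does not say which test was actually used.

```python
import math

def two_proportion_z_test(uncles_before, blocks_before, uncles_after, blocks_after):
    """One-sided z-test: is the uncle rate after the change higher than before?"""
    p_before = uncles_before / blocks_before
    p_after = uncles_after / blocks_after
    # Pooled rate under the null hypothesis that both periods share one uncle rate.
    pooled = (uncles_before + uncles_after) / (blocks_before + blocks_after)
    std_err = math.sqrt(pooled * (1 - pooled) * (1 / blocks_before + 1 / blocks_after))
    z = (p_after - p_before) / std_err
    # One-sided p-value from the standard normal survival function.
    p_value = 0.5 * math.erfc(z / math.sqrt(2))
    return z, p_value

# Hypothetical counts, for illustration only (not real measurements).
z, p_value = two_proportion_z_test(
    uncles_before=2000, blocks_before=40000,
    uncles_after=2040, blocks_after=40000,
)
print(f"z = {z:.2f}, one-sided p = {p_value:.3f}")
```

With these made-up counts the p-value is well above 0.05, so the small rise in the uncle rate would not count as significant. Real conclusions depend on the actual counts and the length of the observation windows.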

When two different miners mine two different blocks at roughly the same time, these competing blocks cause a fork in the blockchain. Once a new block is mined on top of one of them, that branch becomes the longer chain; the other block is discarded and is only referenced as an “uncle”. When forks occur, we measure the time delta between the two competing blocks. We group those deltas into 50 ms segments, which indicates how long it takes for miners to hear of a new block.

Image: //imgur .com/a/G1z8mnd

We can see that almost 20% of miners move to mine the next block within 100 ms (0.1 sec) after a new block is mined, and it takes about 0.6 sec for half of the miners to start mining the next block. Lastly, almost all (90%) of the hashpower is already working on the next block within 1.5 sec.
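
The 50 ms segmentation described above can be sketched as a simple histogram turned into a cumulative share. This is one plausible reading of the method, not bloXroute's actual pipeline: the function names and the sample deltas are hypothetical, and the sample values are not measurements.

```python
from collections import Counter

BUCKET_MS = 50  # segment width used in the text

def cumulative_share_by_bucket(deltas_ms, bucket_ms=BUCKET_MS):
    """Return [(bucket_upper_edge_ms, cumulative_fraction), ...] sorted by time."""
    counts = Counter(int(d // bucket_ms) for d in deltas_ms)
    total = len(deltas_ms)
    result, running = [], 0
    for bucket in sorted(counts):
        running += counts[bucket]
        result.append(((bucket + 1) * bucket_ms, running / total))
    return result

def time_to_reach(cdf, fraction):
    """First bucket edge (ms) at which the cumulative share reaches `fraction`."""
    for edge_ms, share in cdf:
        if share >= fraction:
            return edge_ms
    return None

# Hypothetical fork deltas in milliseconds, for illustration only.
sample_deltas_ms = [40, 90, 220, 380, 510, 590, 760, 980, 1240, 1900]
cdf = cumulative_share_by_bucket(sample_deltas_ms)
print("half reached by (ms):", time_to_reach(cdf, 0.5))
print("90% reached by (ms):", time_to_reach(cdf, 0.9))
```

Reading off the bucket edge where the cumulative share crosses 50% or 90% gives figures of the same kind as the 0.6 sec and 1.5 sec quoted above.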

This should quiet the fears of those concerned that increasing the gas limit will break Ethereum: when such networking optimizations are implemented, we are not seeing a significant difference in uncle rates or propagation times before and after the gas limit increase. The key to minimizing the remaining risk is to increase the gas limit in incremental steps, as we have seen this past year.

How is bloXroute optimizing the network?

Blockchains today are in a similar place to where the Web was 20 years ago: in their infancy, with the scalability problem unsolved. Just as Akamai solved the problem for the Web by propagating data more quickly across the Internet, bloXroute does so today by providing a technology breakthrough that propagates data throughout the blockchain P2P network.

In particular, bloXroute deploys a blockchain distribution network (BDN) that helps all blockchain nodes propagate transactions and blocks more quickly and efficiently. Both Akamai’s and our architectures actively push (propagate) content across the Internet and cache (store) content on their servers in order to serve users better, i.e., with higher throughput and lower latency.

It is this kind of network optimization that allows for a higher gas limit, and we expect to see further improvements in the future, both from bloXroute and from other actors.

Arguments against increasing the gas limit

Some in the community have raised concerns about increasing the block gas limit at all. We address those concerns below.

- Larger blocks increase the time to sync a new node

The time to sync a new node will keep increasing even without any changes to the block size. Hence, this problem needs to be addressed independently. bloXroute’s BDN provides a viable solution: instead of downloading data via a potentially low-bandwidth distant peer in a p2p network, a new node can download data from a nearby BDN at a high rate. While a node can be bottlenecked either by network or by processing transactions, bloXroute’s experience (corroborated by our clients and partners) is that a bloXroute-supported node syncs much faster than a “regular” node. Hence, the networking bottleneck is more dominant in practice. There are no centralization concerns with this approach, because a user can always independently verify that the content downloaded from the BDN is valid.

- Increased block computation time will prevent older, slower nodes from staying in sync and will increase sync time for new nodes

Another concern being voiced is that increasing the gas limit won’t allow full nodes to process new blocks fast enough to keep up with the rate of new blocks arriving, and they will be effectively thrown off the network.

However, a full node running on a commodity PC can usually process a block within 0.5–1 second. Since new blocks arrive every 13 seconds on average, there is roughly a 13–26x margin before this becomes an issue on current hardware. Hardware also improves over time (as an example, Samsung’s SSD EVO improved by 5x since 2017). We don’t see this ever becoming an issue, and it is definitely not an issue now.
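
The margin can be checked with simple arithmetic: divide the average block interval by the per-block processing time. The sketch below uses only the figures quoted in the text; the function name is hypothetical, and reading the result as gas-limit headroom assumes that processing time grows roughly linearly with the gas limit, which is an assumption rather than a measured fact.

```python
BLOCK_INTERVAL_S = 13.0          # average block interval quoted in the text
PROCESSING_TIME_S = (0.5, 1.0)   # per-block processing range quoted in the text

def headroom(block_interval_s, processing_time_s):
    """How many times slower processing could get before a node falls behind."""
    return block_interval_s / processing_time_s

for t in PROCESSING_TIME_S:
    print(f"{t:.1f} s per block -> {headroom(BLOCK_INTERVAL_S, t):.0f}x headroom")
```

This gives a margin between 13x and 26x, the same order of magnitude as the multiplier quoted above.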

- Increased gas limit will increase DoS vulnerability (see Broken Metre: Attacking Resource Metering in EVM @ https:// arxiv .org /pdf /1909.07220.pdf)

The root cause of the above DoS attacks is an inconsistency in the pricing of some instructions, which leads to DoS attacks in the form of low-throughput contracts. As such, this issue is orthogonal to the gas limit, and the solution lies elsewhere, i.e., in appropriately adjusting the gas cost of the affected instructions. Short- and long-term solutions to this problem are outlined in the Broken Metre report.

Additionally, Vitalik recently proposed a new EIP, EIP 2929, to mitigate DoS attacks; “a secondary benefit of this EIP is that it also performs most of the work needed to make stateless witness sizes (https:// ethereum-magicians .org/t/protocol-changes-to-bound-witness-size/3885) in Ethereum acceptable” (https:// eips.ethereum .org/EIPS/eip-2929). In discussions with Vitalik over the past 2 years (most recently at Stanford’s SBC2020), he has consistently argued that gradually increasing the gas limit is viable and that stateless clients are more than practical at this point.

- Increased gas limit may increase chain and state size growth

A concern for all layer 1 chains is how quickly the blockchain itself is growing (chain growth) and how quickly the chain state (the active accounts and all their data) is growing (state size growth). Before a full node can join the Ethereum network, it must sync the entire history of the blockchain; the longer that history is, the more data there is to store, the more time it takes to sync, and the higher the cost to store the data. State size growth may have other implications, such as for memory utilization or client performance, but these issues should be addressed as they reveal themselves.

Many argue that increasing the gas limit would affect the chain-size growth — larger blocks mean the amount of data that needs to be stored grows faster and the problem is exacerbated.

However, data shows that increasing the gas limit does not translate to higher chain growth. On September 1, 2019, the Ethereum miners increased the gas limit significantly, by 25%, from 8M gas to 10M. You would expect to see a jump in the rate of state size growth, but we don’t. (Source: https: //blockchair .com/)

How can that be? Because more gas doesn’t necessarily mean more data is stored on-chain. Gas can be used to transfer wealth, perform computations, load data, etc. Storing data is just one of the things gas is used for. If the extra gas is used by DeFi smart contracts that spend a lot of gas computing trading-pair prices, for example, it won’t affect state size growth.
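
A rough back-of-the-envelope sketch of this argument: new state per block depends on how much gas goes to writing new storage slots, not on the gas limit alone. The 20,000 gas figure is the EVM cost of writing a non-zero value to an empty slot. The 64 bytes per slot, the function name, and the workload shares are assumptions made for illustration; real state growth also includes trie overhead and new accounts.

```python
SSTORE_NEW_SLOT_GAS = 20_000   # gas to write a non-zero value to an empty slot
BYTES_PER_SLOT = 64            # assumption: 32-byte key + 32-byte value, no trie overhead

def new_state_bytes_per_block(gas_limit, storage_share_pct):
    """Rough bound on new state bytes if storage_share_pct% of gas creates new slots."""
    storage_gas = gas_limit * storage_share_pct // 100
    new_slots = storage_gas // SSTORE_NEW_SLOT_GAS
    return new_slots * BYTES_PER_SLOT

# Hypothetical workload mixes: the storage share is an illustrative parameter.
before = new_state_bytes_per_block(10_000_000, storage_share_pct=25)
after_same_mix = new_state_bytes_per_block(12_500_000, storage_share_pct=25)
after_compute_heavy = new_state_bytes_per_block(12_500_000, storage_share_pct=20)
print(before, after_same_mix, after_compute_heavy)
```

If the extra 2.5M gas is spent entirely on computation, the storage share falls from 25% to 20% and the new state per block is unchanged, even though the gas limit rose by 25%.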

Similarly, it has been demonstrated (see https: //medium .com/@akhounov/is-ethereum-state-growing-faster-now-and-ethereum-state-analytics-project-97777ab47af) that the state growth does not always correlate with the gas limit.

- Increased reliance on bloXroute is a move towards centralization

bloXroute’s BDN does not replace the Ethereum p2p network, but rather improves it. The p2p communication is still necessary because in certain scenarios it can still provide a faster path, and in all scenarios it can be used to verify the correctness of bloXroute’s BDN. Another related question is whether everyone needs to connect directly to bloXroute in order for it to provide benefits. The answer is no. bloXroute is already connected to many miners and clients. Hence, even those who are not directly connected to bloXroute’s BDN are pretty close to it, because their immediate peers are very likely directly connected to bloXroute. Lastly, by design, bloXroute introduces open-source backup nodes: idle nodes that can be operated by any interested party or stakeholder wishing to ensure fast block propagation, e.g., miners, validators, and pools. If bloXroute maliciously rejects a transaction, that transaction would be broadcast to all the gateways by the backup network. Furthermore, if bloXroute were to provide inconsistent updates or suffer from a system-wide failure, it would be replaced by the backup nodes for as long as necessary to find a remedy or a replacement.

- Blocks could become much larger than they are now (perhaps 10x) even if the gas limit does not change, if rollups start generating much more calldata. These larger blocks might increase propagation time and uncle rate. Increasing the gas limit on top of this could make the problem worse.

If, due to rollups, blocks could become much larger than they are now (perhaps 10x) even if the gas limit does not change, then increasing the gas limit is not the key source of the “large block” problem. Rollups are an order-of-magnitude bigger problem. This means that we have to find a solution for handling blocks that are 10x larger than they are now. bloXroute is such a solution, today. Network caching alone, as deployed by bloXroute, reduces the effective block size by at least 50x.